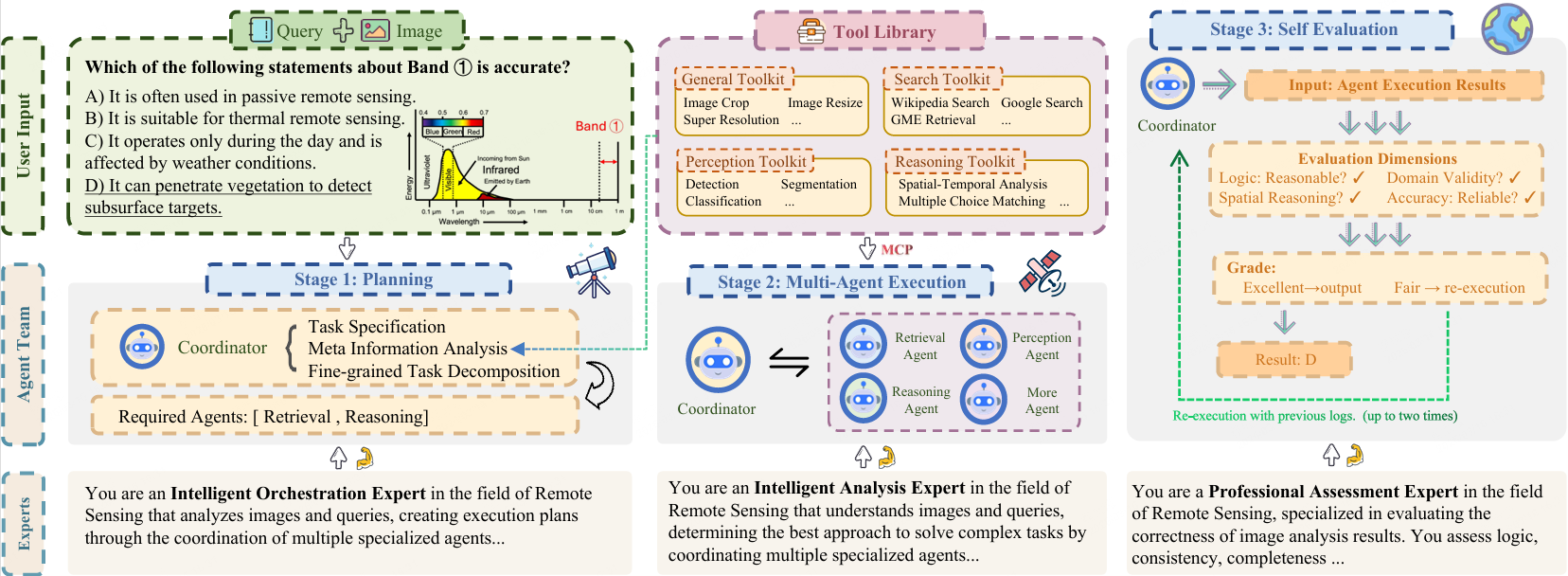

| Toolkit | Capability |
|---|---|
| General | Format conversion, filtering, scaling, optional neural super-resolution, etc. |
| Knowledge | Web search (multi-engine fallback); optional image search; GME text–image similarity for candidate ranking |
| Perception | YOLO11 classification & detection; DeepLabV3+ (Xception) segmentation |
| Reasoning | Multimodal LLM reasoning and in-agent option alignment |
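The Knowledge toolkit's web search is described only as using multi-engine fallback. The pattern can be sketched in Python as follows, assuming each engine is a callable that returns a list of result snippets or raises on failure. The engine functions and `search_with_fallback` are hypothetical illustrations, not GeoMMAgent's actual implementation.

```python
from typing import Callable, List, Sequence

# Hypothetical engine signature: query in, list of result snippets out.
# An engine signals failure (timeout, quota, network error) by raising.
SearchEngine = Callable[[str], List[str]]


def search_with_fallback(query: str, engines: Sequence[SearchEngine]) -> List[str]:
    """Try each engine in order and return the first non-empty result list."""
    errors = []
    for engine in engines:
        try:
            results = engine(query)
        except Exception as exc:  # record the failure and fall back to the next engine
            errors.append(exc)
            continue
        if results:  # an empty list counts as "no answer", so keep falling back
            return list(results)
    # Raise only if every engine failed; otherwise report "no results".
    if engines and len(errors) == len(engines):
        raise RuntimeError(f"all {len(engines)} search engines failed") from errors[-1]
    return []


# Usage with three hypothetical engines.
def flaky_engine(query: str) -> List[str]:
    raise TimeoutError("engine unavailable")


def empty_engine(query: str) -> List[str]:
    return []


def working_engine(query: str) -> List[str]:
    return [f"result for {query}"]


print(search_with_fallback("NDVI definition", [flaky_engine, empty_engine, working_engine]))
# prints ['result for NDVI definition']
```

Ordering the engines by preference keeps the primary source in charge while the fallbacks only absorb outages or empty answers.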

GeoMMBench & GeoMMAgent: A Multimodal Benchmark and Multi-Agent Framework for GeoScience and Remote Sensing

¹ RIKEN AIP, ² Wuhan University, ³ Linköping University, ⁴ University of Tokyo, ⁵ Nanjing University of Information Science and Technology